Before we dig into backlink analysis and link planning, it helps to state our core philosophy. It keeps our method for building backlink campaigns simple and gives us a clear reference point as we explore the topic in greater depth.

Within the domain of SEO, we advocate for prioritizing the reverse engineering of our competitors' strategies. This essential step not only reveals valuable insights but also shapes the action plan that will steer our optimization efforts effectively.

Navigating the intricacies of Google's sophisticated algorithms can prove to be a daunting task, as we often rely on limited indicators such as patents and quality rating guidelines. While these resources can ignite innovative SEO testing ideas, it is imperative to approach them with a critical mindset and avoid taking them at face value. The relevance of older patents in the context of today's ranking algorithms remains uncertain, making it vital to gather insights, conduct thorough tests, and substantiate our assumptions with current data.

The SEO Mad Scientist operates like a detective, using these clues as the foundation for creating tests and experiments. While this theoretical understanding is indeed beneficial, it should only represent a minor component of your comprehensive SEO campaign strategy.

Next, we turn to competitive backlink analysis, which sits at the center of this approach.

In my experience, reverse engineering the elements that already succeed within a SERP is the most effective way to guide your SEO optimizations.

To illustrate this principle, let’s revisit a fundamental concept from seventh-grade algebra. Solving for ‘x,’ or any variable, means evaluating the known constants and applying a series of operations to reveal the value of the variable. In SEO, the constants are what we can observe about the pages that already rank: the topics they address, the links they secure, and their keyword densities. The variable is the set of changes our own site needs in order to compete.

However, while collecting hundreds or thousands of data points may seem advantageous, much of this information might not yield significant insights. The true benefit of analyzing larger datasets lies in pinpointing shifts that correlate with changes in rank. For many, a concentrated list of best practices gleaned from reverse engineering will be sufficient for effective link building.

The final part of this strategy is not merely achieving parity with competitors but surpassing them. That goal may sound broad, especially in highly competitive niches where matching top-ranking sites can take considerable time, but baseline parity is only the first phase. Reaching it, and then moving past it, depends on a comprehensive, data-driven backlink analysis.

Once you have established this baseline, your objective should be to outshine competitors by providing Google with the appropriate signals to enhance rankings, ultimately securing a prominent position in the SERPs. Unfortunately, these essential signals often come down to common sense in the realm of SEO.

I dislike this notion because it is subjective, but experience, experimentation, and a track record of SEO success are what build the confidence to see where competitors fall short and to address those gaps in your own plan.

5 Strategic Steps to Elevate Your SERP Performance

By examining the websites and links that make up a SERP, we can collect actionable insights for building a robust link plan. In this segment, we will organize that information systematically to surface the patterns that matter for our campaign.

Let’s take a moment to elaborate on the rationale behind organizing SERP data in this manner. Our approach emphasizes conducting a deep dive into the leading competitors, offering a thorough narrative as we explore further.

Conduct a few searches on Google, and you will quickly see result counts that often exceed 500 million.

Although our primary focus is on the top-ranking websites for our analysis, it’s essential to note that the links directed towards even the top 100 results can hold statistical significance, provided they meet the criteria of being neither spammy nor irrelevant.

My aim is to gather expansive insights into the factors that sway Google's ranking decisions for top-ranking sites across various queries. With this knowledge, we can better formulate effective strategies. Here are just a few objectives we can accomplish through this analysis.

1. Uncover Key Links Impacting Your SERP Landscape

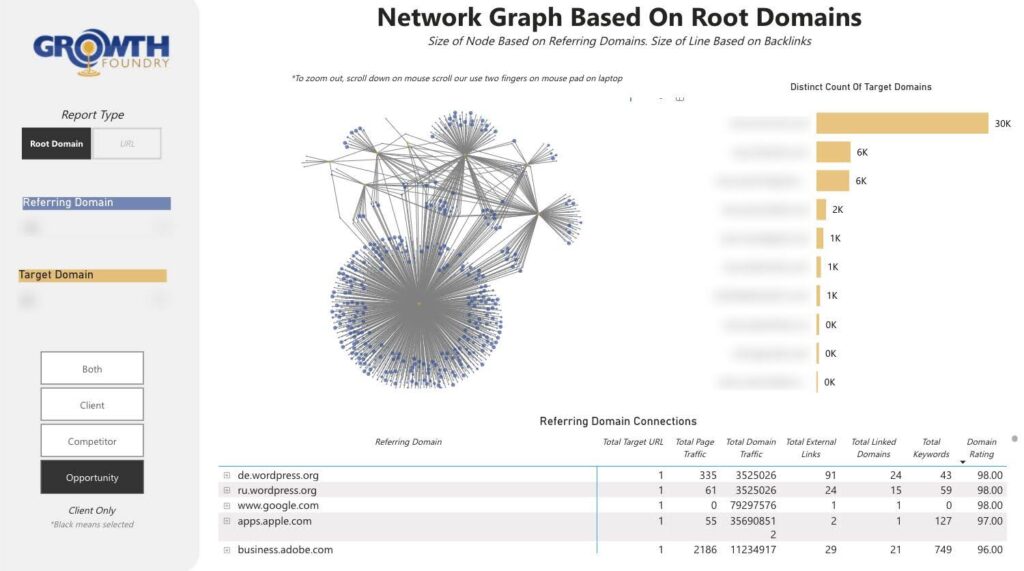

In this context, a key link is a link that consistently appears in the backlink profiles of our competitors. The accompanying image illustrates this: certain links point to nearly every site in the top 10. By analyzing a broader set of competitors, you can uncover even more intersections like the one depicted here. The idea is consistent with published link-analysis work, including the following sources.

- https://patents.google.com/patent/US6799176B1/en?oq=US+6%2c799%2c176+B1 – This patent enhances the original PageRank concept by integrating topics or context, acknowledging that different clusters (or patterns) of links have varying significance depending on the subject area. It serves as an early example of Google refining link analysis beyond a singular global PageRank score, suggesting that the algorithm detects patterns of links among topic-specific “seed” sites/pages and utilizes that to adjust rankings.

Essential Quotes for Effective Backlink Analysis

Implication: Google identifies distinct “topic” clusters (or groups of sites) and employs link analysis within those clusters to generate “topic-biased” scores.

While it doesn’t explicitly state “we favor link patterns,” it indicates that Google examines how and where links emerge, categorized by topic—a more nuanced approach than relying on a single universal link metric.

“…We establish a range of ‘topic vectors.’ Each vector ties to one or more authoritative sources… Documents linked from these authoritative sources (or within these topic vectors) earn an importance score reflecting that connection.”

Insightful Excerpts from Original Research Papers

“An expert document is focused on a specific topic and contains links to numerous non-affiliated pages on that topic… The Hilltop algorithm identifies and ranks documents that links from experts point to, enhancing documents that receive links from multiple experts…”

The Hilltop algorithm aims to identify “expert documents” for a topic—pages recognized as authorities in a specific field—and analyzes who they link to. These linking patterns can convey authority to other pages. While not explicitly stated as “Google recognizes a pattern of links and values it,” the underlying principle suggests that when a group of acknowledged experts frequently links to the same resource (pattern!), it constitutes a strong endorsement.

- Implication: If several experts within a niche link to a specific site or page, it is perceived as a strong (pattern-based) endorsement.

Although Hilltop is an older algorithm, it is believed that aspects of its design have been integrated into Google’s broader link analysis algorithms. The concept of “multiple experts linking similarly” suggests that Google pays attention to backlink patterns.

I consistently seek positive, significant signals that recur during competitive analysis and aim to leverage those opportunities whenever feasible.
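As a rough illustration of the key-link idea, here is a minimal Python sketch that counts how many competitors each referring domain links to and keeps the ones that cover most of the SERP. The domain names, the `find_key_links` helper, and the 75% coverage threshold are all assumptions made for this example; in practice the sets would come from your backlink exports.

```python
from collections import Counter

# Hypothetical data: each competitor mapped to the set of referring domains
# found in its backlink report (every domain name here is made up).
competitor_backlinks = {
    "competitor-a.example": {"news.example", "directory.example", "blog.example"},
    "competitor-b.example": {"news.example", "directory.example", "forum.example"},
    "competitor-c.example": {"news.example", "directory.example"},
    "competitor-d.example": {"news.example", "wiki.example"},
}


def find_key_links(backlinks, min_share=0.75):
    """Return (domain, coverage) pairs for referring domains that link to
    at least `min_share` of the competitors (threshold is an assumption)."""
    counts = Counter(
        domain for domains in backlinks.values() for domain in domains
    )
    total = len(backlinks)
    key = {d: c / total for d, c in counts.items() if c / total >= min_share}
    # Sort by coverage (highest first), then alphabetically for stable output.
    return sorted(key.items(), key=lambda item: (-item[1], item[0]))


key_links = find_key_links(competitor_backlinks)
for domain, coverage in key_links:
    print(f"{domain}: links to {coverage:.0%} of competitors")
```

Lowering `min_share` widens the net, which is useful once you move from the top 10 to a larger set of competitors.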

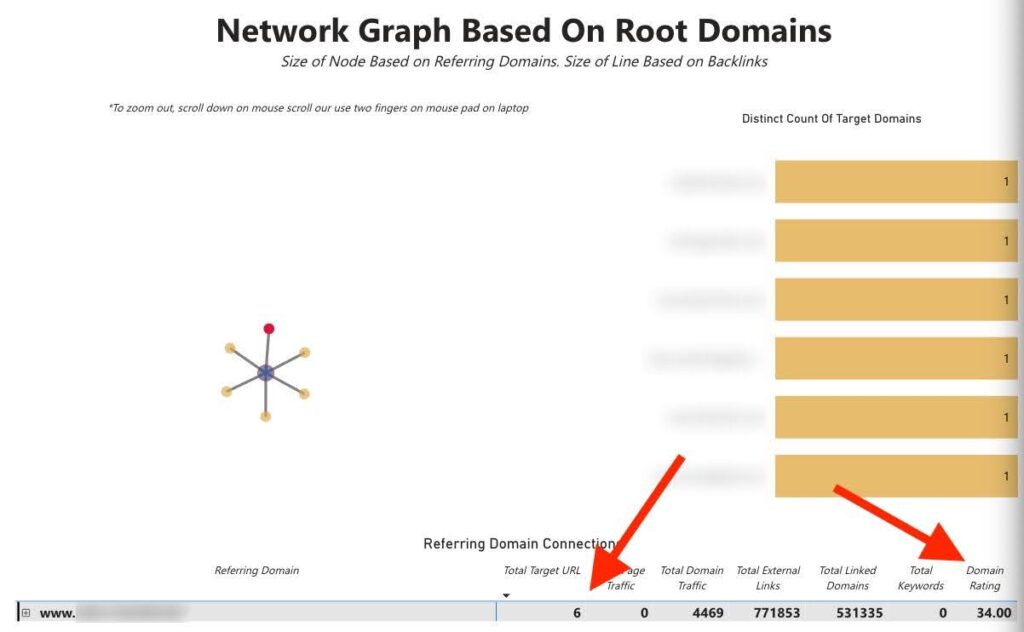

2. Backlink Analysis: Harnessing Unique Link Opportunities through Degree Centrality

The search for links that bring us to competitive parity starts with an analysis of the top-ranking websites. Manually sifting through numerous backlink reports from Ahrefs is laborious, and delegating the task to a virtual assistant or team member can still leave you with a backlog of work.

Ahrefs offers users the ability to input up to 10 competitors into their link intersect tool, which I consider the premier tool for link intelligence. This tool allows users to streamline their analysis, provided they are comfortable with its depth.

As previously mentioned, our focus is on expanding our reach beyond the conventional list of links that other SEOs are targeting to achieve parity with the top-ranking websites. This strategic approach provides us with a competitive edge during the early planning phases as we work to influence the SERPs.

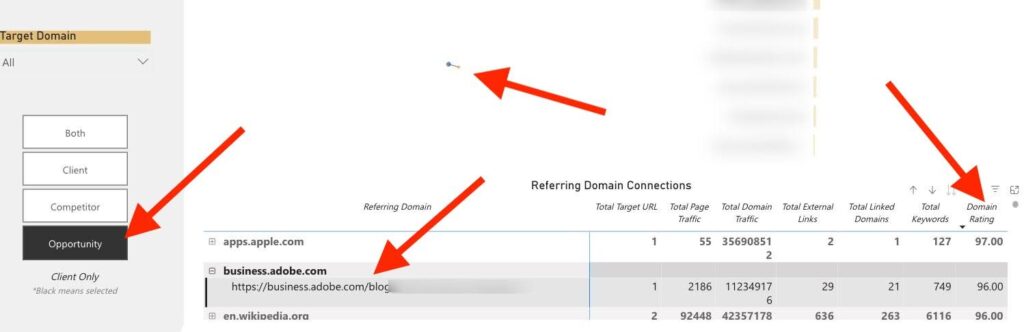

Consequently, we implement various filters within our SERP Ecosystem to identify “opportunities,” defined as links that our competitors possess but we do not.

This method allows us to rapidly identify orphaned nodes within the network graph, meaning referring domains that link to competitors but not to us. I’m not particularly fond of third-party metrics, but sorting the table by Domain Rating (DR) is a quick way to surface potent links to add to our outreach workbook.
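To make the filtering step concrete, here is a small Python sketch under stated assumptions: the rows, the `find_opportunities` helper, and the DR values are invented for illustration, and "degree" here is simply the raw count of tracked sites a referring domain links to rather than a normalized centrality score.

```python
from collections import defaultdict

# Hypothetical backlink rows: (referring_domain, linked_site, domain_rating).
rows = [
    ("news.example", "competitor-a.example", 82),
    ("news.example", "competitor-b.example", 82),
    ("news.example", "our-site.example", 82),
    ("directory.example", "competitor-a.example", 64),
    ("directory.example", "competitor-b.example", 64),
    ("niche-blog.example", "competitor-c.example", 71),
    ("forum.example", "competitor-a.example", 35),
]
OUR_SITE = "our-site.example"


def find_opportunities(rows, our_site):
    """List referring domains that link to competitors but not to us."""
    linked_sites = defaultdict(set)
    ratings = {}
    for source, target, dr in rows:
        linked_sites[source].add(target)
        ratings[source] = dr

    opportunities = []
    for source, targets in linked_sites.items():
        if our_site in targets:
            continue  # we already have a link from this domain
        opportunities.append(
            {"domain": source, "degree": len(targets), "dr": ratings[source]}
        )
    # Sort by DR (highest first), then by name for stable output.
    return sorted(opportunities, key=lambda r: (-r["dr"], r["domain"]))


opportunities = find_opportunities(rows, OUR_SITE)
for row in opportunities:
    print(row)
```

Sorting by `degree` instead of `dr` would favor domains that link to several competitors, which overlaps with the key-link idea from the first step.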

3. Streamline and Optimize Your Data Pipelines Effectively

Keeping the SERP ecosystem in a structured pipeline makes it easy to add new competitors and integrate them into our network graphs. Once your SERP ecosystem is established, expanding it becomes a fluid process. You can also eliminate unwanted spam links, merge data from various related queries, and maintain a more comprehensive database of backlinks.

Effectively organizing and filtering your data serves as the initial step toward producing scalable outputs. This meticulous attention to detail can uncover countless new opportunities that might have otherwise gone unnoticed.

Transforming data and creating internal automations, while introducing additional layers of analysis, can foster the development of innovative concepts and strategies. Personalizing this process will reveal numerous applications for such a setup, far exceeding what can be addressed in this article.
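One possible shape for such a pipeline step is sketched below in Python. It merges backlink rows collected for related queries, normalizes the referring domains, removes duplicates, and drops anything on a spam list. The `normalize` and `merge_link_sets` helpers, the sample rows, and the spam list are assumptions for illustration only.

```python
def normalize(domain):
    """Lowercase a domain and strip a leading 'www.' so duplicates collapse."""
    domain = domain.strip().lower()
    return domain[4:] if domain.startswith("www.") else domain


def merge_link_sets(*datasets, spam_domains=frozenset()):
    """Merge (referring_domain, linked_site) rows from several queries,
    removing duplicates and any referring domain flagged as spam."""
    merged = set()
    for dataset in datasets:
        for source, target in dataset:
            source, target = normalize(source), normalize(target)
            if source in spam_domains:
                continue
            merged.add((source, target))
    return sorted(merged)


# Hypothetical exports for two related queries.
query_one = [
    ("WWW.News.example", "competitor-a.example"),
    ("spam-links.example", "competitor-a.example"),
]
query_two = [
    ("news.example", "competitor-a.example"),
    ("blog.example", "competitor-e.example"),
]

ecosystem = merge_link_sets(
    query_one, query_two, spam_domains={"spam-links.example"}
)
print(ecosystem)
```

Adding a new competitor then amounts to passing one more dataset into `merge_link_sets`.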

4. Identify Mini Authority Websites Using Eigenvector Centrality

In the field of graph theory, eigenvector centrality posits that nodes (websites) gain significance as they connect to other important nodes. The greater the importance of the neighboring nodes, the higher the perceived value of the node itself.

This may not be beginner-friendly, but once the data is organized within your system, scripting to uncover these valuable links becomes a straightforward task, and even AI can assist you in this process.
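For readers who want to see the mechanics, here is a minimal pure-Python sketch of eigenvector centrality using power iteration. It makes several assumptions: links are treated as undirected connections, the graph is connected, all node names are hypothetical, and each update adds the node's own score to the sum of its neighbors' scores so that the iteration settles on bipartite graphs too. A graph library would normally handle this for you.

```python
import math


def eigenvector_centrality(edges, max_iter=200, tol=1e-10):
    """Approximate eigenvector centrality for an undirected graph."""
    neighbors = {}
    for a, b in edges:
        neighbors.setdefault(a, set()).add(b)
        neighbors.setdefault(b, set()).add(a)

    scores = {node: 1.0 for node in neighbors}
    for _ in range(max_iter):
        # Each node keeps its own score and adds the scores of its neighbors.
        updated = {
            node: scores[node] + sum(scores[n] for n in neighbors[node])
            for node in neighbors
        }
        # Rescale so the vector has unit length and values stay bounded.
        norm = math.sqrt(sum(value * value for value in updated.values()))
        updated = {node: value / norm for node, value in updated.items()}
        change = sum(abs(updated[node] - scores[node]) for node in neighbors)
        scores = updated
        if change < tol:
            break
    return scores


# Hypothetical graph: three well-connected hubs, one "mini authority" linked
# to two hubs, and a fringe site with the same number of connections that
# only touches low-value nodes.
edges = [
    ("hub-a.example", "hub-b.example"),
    ("hub-a.example", "hub-c.example"),
    ("hub-b.example", "hub-c.example"),
    ("mini-authority.example", "hub-a.example"),
    ("mini-authority.example", "hub-b.example"),
    ("hub-c.example", "leaf-1.example"),
    ("leaf-1.example", "fringe.example"),
    ("fringe.example", "leaf-2.example"),
]

scores = eigenvector_centrality(edges)
for node, score in sorted(scores.items(), key=lambda item: -item[1]):
    print(f"{node}: {score:.3f}")
```

The mini authority and the fringe site both have two connections, yet the mini authority scores higher because its neighbors are the important hubs. That difference is what degree counts alone would miss.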

5. Backlink Analysis: Utilizing Disproportionate Competitor Link Distributions

While the concept may not be groundbreaking, examining 50-100 websites in the SERP and pinpointing the pages that receive the most links is an effective strategy for extracting valuable insights.

We can focus solely on “top linked pages” on a site, but this method often yields limited information, especially for well-optimized websites. Typically, you will notice a few links directed towards the homepage and the primary service or location pages.

The ideal strategy involves targeting pages with a disproportionate number of links. To do this programmatically, you will need to filter these opportunities using applied mathematics, with the specific methodology left to your discretion. This can be challenging because the threshold for outlier backlinks varies widely with overall link volume. For instance, a page holding 20% of the links means 20 links on a site with 100 links but 2 million links on a site with 10 million, and those are very different scenarios.

For example, if a single page attracts 2 million links while hundreds or thousands of other pages collectively gather the remaining 8 million, it indicates that we should reverse-engineer that specific page. Was it a viral sensation? Does it offer a valuable tool or resource? There must be a compelling reason for the influx of links.
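Since the methodology is left open, here is one possible heuristic, sketched in Python, rather than a definitive method. It flags a page when it holds a large share of the site's links and also has many times more links than the site's median page. The `flag_outlier_pages` helper, both thresholds, and the scaled-down sample site are assumptions for illustration.

```python
from statistics import median


def flag_outlier_pages(page_links, min_share=0.15, median_multiple=10):
    """Flag pages whose link count is disproportionate for the site.

    A page is flagged when it holds at least `min_share` of all links AND
    has at least `median_multiple` times the links of the median page.
    Both thresholds are assumptions to tune per niche.
    """
    total = sum(page_links.values())
    typical = median(page_links.values())
    flagged = []
    for url, links in page_links.items():
        share = links / total
        if share >= min_share and links >= median_multiple * typical:
            flagged.append((url, round(share, 3)))
    return sorted(flagged, key=lambda row: -row[1])


# Hypothetical site, scaled down from the example above: one page holds
# 2,000 links while 40 other pages share the remaining 8,000.
site = {f"/blog/post-{i}": 200 for i in range(40)}
site["/free-calculator"] = 2000

flagged = flag_outlier_pages(site)
print(flagged)
```

Comparing against the median keeps the test relative to each site's own link volume, which addresses the problem of a fixed percentage meaning very different things on small and large sites.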

Backlink Analysis: Understanding Unflagged Scores

With this valuable data, you can initiate an investigation into why certain competitors are acquiring unusual amounts of links to specific pages on their site. Utilize this understanding to inspire the creation of content, resources, and tools that users are likely to link to.

The potential of this data is vast, which justifies investing time in a process for analyzing larger sets of link data. The opportunities you can capitalize on are virtually limitless.

Backlink Analysis: Your Comprehensive Step-by-Step Guide to Crafting an Effective Link Plan

Your initial step in this process involves gathering backlink data. We highly recommend utilizing Ahrefs due to its consistently superior data quality compared to competing tools. However, if feasible, blending data from multiple platforms can further enhance your analysis.

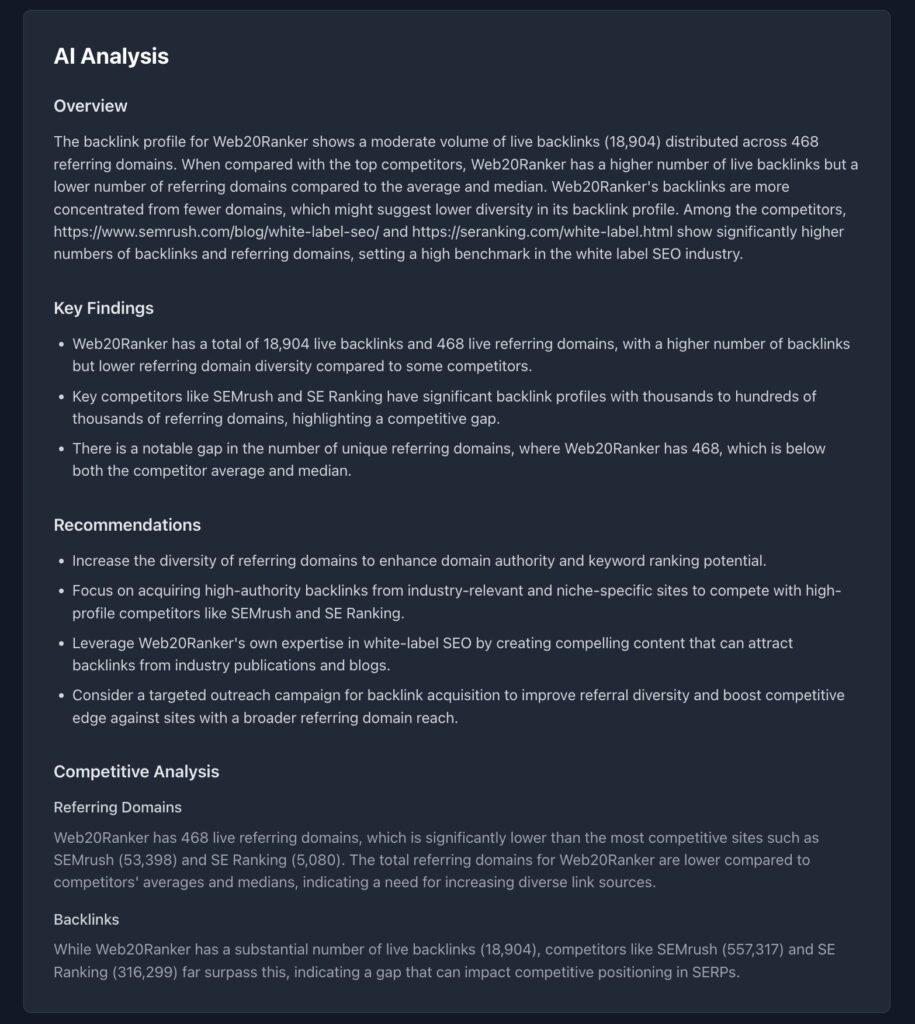

Our link gap tool serves as an exceptional solution. Simply input your site, and you will receive all the critical information:

- Visualizations of link metrics

- URL-level distribution analysis (both live and total)

- Domain-level distribution analysis (both live and total)

- AI analysis for deeper insights

Map out the exact links you’re missing—this focus will help close the gap and strengthen your backlink profile with minimal guesswork. Our link gap report provides more than just graphical data; it also includes an AI analysis, offering an overview, key findings, competitive analysis, and link recommendations.

It’s common to uncover unique links on one platform that aren’t available on others; however, consider your budget and your capacity to process the data into a cohesive format.

Next, you will need a data visualization tool. There’s no shortage of options available to help you achieve this objective.

The article Backlink Analysis: A Data-Driven Strategy for Effective Link Plans was found on https://limitsofstrategy.com